When I started working with Elastic Search in PDI, following this post:

http://pedroalves-bi.blogspot.co.uk/2011/07/elasticsearch-kettle-and-ctools.html?m=1

I ran into many issues, and while debugging them I could not find much information or support anywhere. This blog therefore describes how to insert bulk data into the Elastic Search engine using the Elastic Search Bulk Insert step in Kettle, and how to integrate the output of Kettle with CDA.

Currently, it is not possible to run Pentaho with a higher version of Elastic Search (e.g. 0.90.5), mainly because the PDI components have been compiled against the 0.16.3 classes.

Prerequisites:

- Elastic Search engine - ES 0.19.5

- Pentaho BA Server - 4.8.0 GA

- Kettle - 4.4

- Download ES version 0.19.5 from http://www.elasticsearch.org/downloads/0-19-5/

- Extract the elasticsearch-0.19.5.tar file into the /usr/share directory.

- Navigate to the /usr/share directory and issue the following command to install the head plugin:

- $ elasticsearch-0.19.5/bin/plugin -install mobz/elasticsearch-head

- Navigate to the /usr/share/elasticsearch-0.19.5 directory and issue the following command to start ES:

- $ bin/elasticsearch (or bin/elasticsearch -f to keep it running in the foreground)

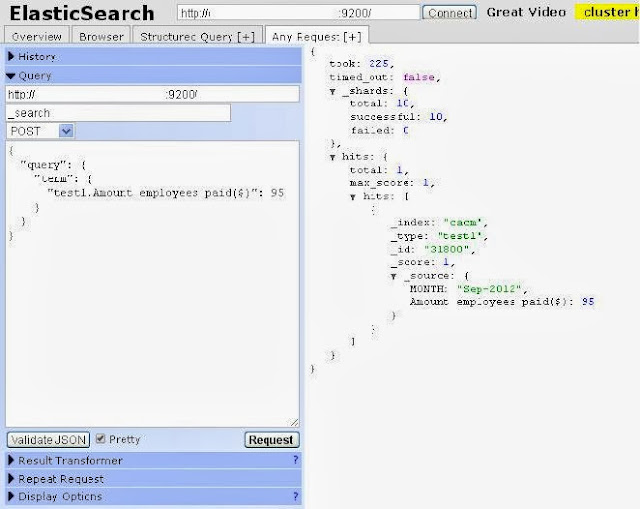

- Open http://localhost:9200/_plugin/head/ in a browser to view the cluster through the head plugin.
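Before wiring ES into Kettle, it can help to confirm which version the running node reports, since the setup in this post relies on ES 0.19.5 rather than a newer release. The Python sketch below is only a convenience check, not part of the original procedure. It assumes the node serves HTTP on the default localhost:9200 and that its root URL returns a JSON document with a `version.number` field; the helper names (`fetch_root`, `parse_es_version`, `is_expected_version`) are hypothetical.

```python
import json
import urllib.request

# Assumption: the ES node listens on the default HTTP port on this machine.
ES_URL = "http://localhost:9200/"

# The ES version this walkthrough is built around.
EXPECTED_VERSION = "0.19.5"


def fetch_root(url: str = ES_URL, timeout: float = 5.0) -> str:
    """Fetch the JSON document served at the ES root URL."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def parse_es_version(body: str) -> str:
    """Extract version.number from the root-URL JSON (assumed layout)."""
    info = json.loads(body)
    return info["version"]["number"]


def is_expected_version(body: str, expected: str = EXPECTED_VERSION) -> bool:
    """True when the node reports exactly the expected ES version."""
    return parse_es_version(body) == expected
```

Against a live node, `print(parse_es_version(fetch_root()))` prints the version it reports; if `is_expected_version(fetch_root())` is False, the PDI jars and patch described in this post may not match the server.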

Inserting bulk data into ES with a Kettle transformation:

- Create a transformation (Table Input -> Elastic Search Bulk Insert).

- Copy the elasticsearch* and lucene* jars from the lib directory of the 0.19.5 ES server to the .../design-tools/data-integration/lib/elasticsearch directory.

- Download the patch file (es_0.19.4_patch.jar) from https://sites.google.com/site/filecabinkkarthik21bigdata/uploadfile/es_0.19.4_patch.jar?attredirects=0&d=1 and copy it into PDI/lib.

- Restart PDI.

- In the Elastic Search Bulk Insert step, provide the IP address and port number of the Elastic Search engine on the Servers tab.

- Note: you must select a value for the ID Field setting.

- Click Test Connection. A success message, as in the screenshot below, means PDI is connected to ES.

- Run the transformation. It inserts the bulk data into the ES engine.
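To see roughly what a bulk insert amounts to, the sketch below builds a request body for the ES REST `_bulk` endpoint from a list of rows, using the selected ID field as each document's `_id`. This is an illustration under stated assumptions, not PDI's internal implementation: the Kettle step talks to ES through its own client, and the index name, type name, and the helpers `build_bulk_body` and `post_bulk` are hypothetical. The body follows the bulk format of newline-delimited JSON, with one action line followed by one source line per document.

```python
import json
import urllib.request
from typing import Any, Dict, Iterable


def build_bulk_body(rows: Iterable[Dict[str, Any]], index: str,
                    doc_type: str, id_field: str) -> str:
    """Build a newline-delimited _bulk body with one index action per row.

    The value of id_field becomes the document _id, mirroring the
    ID Field setting of the Kettle step (assumed behaviour).
    """
    lines = []
    for row in rows:
        if id_field not in row:
            raise KeyError(f"row is missing ID field {id_field!r}")
        # Action line: where the following document should be indexed.
        action = {"index": {"_index": index, "_type": doc_type,
                            "_id": str(row[id_field])}}
        lines.append(json.dumps(action))
        # Source line: the row itself becomes the document.
        lines.append(json.dumps(row))
    # The bulk format requires every line, including the last, to end in "\n".
    return ("\n".join(lines) + "\n") if lines else ""


def post_bulk(body: str, url: str = "http://localhost:9200/_bulk") -> str:
    """POST a bulk body to a running node and return the raw JSON reply."""
    req = urllib.request.Request(url, data=body.encode("utf-8"), method="POST")
    with urllib.request.urlopen(req) as resp:
        return resp.read().decode("utf-8")
```

For example, `build_bulk_body([{"id": 1, "name": "a"}], "customers", "customer", "id")` yields one action line and one source line; sending it with `post_bulk(...)` (or with `curl -XPOST localhost:9200/_bulk --data-binary @body.json`) indexes that row under `_id` "1". The explicit `_id` is why the ID Field matters: without it, every run would add new documents instead of overwriting existing ones.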

Hey!!

Thanks for the great blog. Can you please let me know whether it now supports higher versions of ES, for example 1.4.2? If so, where can we find the jar files?

Thanks,

Santosh
